LexStack vs building your own legal RAG pipeline: an honest comparison

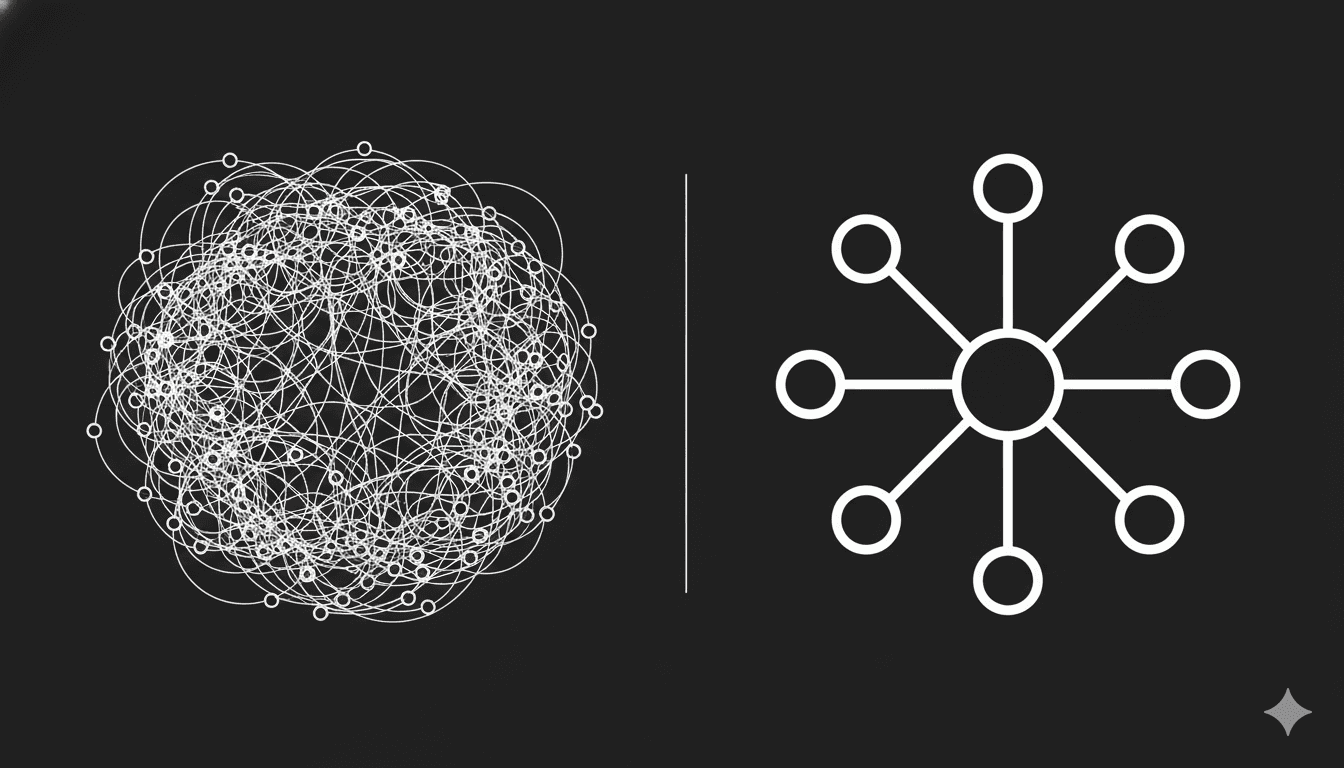

Building your own RAG pipeline for legal AI is not that hard. The first version takes a few days. The problem is everything that comes after the first version.

This post is not a sales pitch. It is an honest accounting of what teams typically build when they roll their own legal AI infrastructure, what breaks, and where the actual complexity lives. The goal is to help you make a clear decision about where to spend your engineering time.

The real cost of building your own is not the initial build. It is the ongoing maintenance of a system that needs to keep working as your documents, your queries, and your LLMs change.

What you need to build for a production legal RAG system

A production-grade legal RAG system is not just a vector store and an LLM call. Here is what most teams end up building over time:

PDF ingestion and chunking

Legal PDFs are not clean. They have multi-column layouts, header and footer text that bleeds into content, scanned pages, complex tables, and cross-references. A chunker that works on blog posts will not work reliably on a 300-page contract. You need layout-aware chunking that preserves structure and ideally extracts bounding box metadata for citation highlighting.

Building time: 1 to 2 weeks to get right on real documents. Ongoing: breaks on new document formats.
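As a rough illustration of what "layout-aware" means here, the sketch below drops lines that repeat on most pages (a common signature of headers and footers) and builds chunks that keep their page number and a bounding box. It assumes an upstream PDF parser has already produced lines with coordinates; the `Line` and `Chunk` types, the 0.6 repetition threshold, and the character limit are all hypothetical choices, not LexReviewer's implementation.

```python
from collections import Counter
from dataclasses import dataclass

BBox = tuple[float, float, float, float]  # (x0, y0, x1, y1) in page coordinates


@dataclass(frozen=True)
class Line:
    text: str
    page: int
    bbox: BBox


@dataclass(frozen=True)
class Chunk:
    text: str
    page: int
    bbox: BBox  # union of the boxes of all lines in the chunk


def strip_repeated_lines(lines: list[Line], min_page_fraction: float = 0.6) -> list[Line]:
    """Drop lines whose text recurs on most pages (likely headers or footers)."""
    page_count = len({ln.page for ln in lines})
    if page_count < 2:
        return list(lines)
    # Count on how many distinct pages each text appears.
    unique_text_pages = {(ln.text.strip(), ln.page) for ln in lines}
    pages_per_text = Counter(text for text, _page in unique_text_pages)
    repeated = {t for t, n in pages_per_text.items() if n / page_count >= min_page_fraction}
    return [ln for ln in lines if ln.text.strip() not in repeated]


def _make_chunk(lines: list[Line]) -> Chunk:
    x0s, y0s, x1s, y1s = zip(*(ln.bbox for ln in lines))
    return Chunk(
        text="\n".join(ln.text for ln in lines),
        page=lines[0].page,
        bbox=(min(x0s), min(y0s), max(x1s), max(y1s)),
    )


def chunk_lines(lines: list[Line], max_chars: int = 800) -> list[Chunk]:
    """Group consecutive lines into chunks that never cross a page boundary."""
    chunks: list[Chunk] = []
    current: list[Line] = []
    for line in lines:
        size = sum(len(ln.text) for ln in current)
        if current and (line.page != current[0].page or size + len(line.text) > max_chars):
            chunks.append(_make_chunk(current))
            current = []
        current.append(line)
    if current:
        chunks.append(_make_chunk(current))
    return chunks
```

Keeping chunks within a single page is a simplification that makes one bounding box per chunk meaningful; clauses that span pages would need a list of boxes instead.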

Hybrid retrieval

Semantic-only retrieval misses exact clause references. BM25-only retrieval misses semantic intent. You need both, merged with a ranking strategy that handles the overlap. This means running two retrieval systems, managing their indices, and building a merge layer.

Building time: 3 to 5 days. Ongoing: tuning the merge weights as your query distribution shifts.
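One common way to build the merge layer is weighted reciprocal rank fusion (RRF), which combines ranked lists without needing the two systems' scores to be comparable. The sketch below is a minimal version of that technique, not necessarily the strategy LexReviewer uses; the retriever names, chunk ids, and weights are made up for illustration, and `k = 60` is just the conventional default.

```python
from collections import defaultdict


def weighted_rrf(
    rankings: dict[str, list[str]],
    weights: dict[str, float],
    k: int = 60,
) -> list[tuple[str, float]]:
    """Fuse ranked lists of chunk ids with weighted reciprocal rank fusion.

    rankings maps a retriever name to its chunk ids, best first.
    weights maps a retriever name to its merge weight (default 1.0).
    """
    scores: dict[str, float] = defaultdict(float)
    for retriever, ranked_ids in rankings.items():
        weight = weights.get(retriever, 1.0)
        for rank, chunk_id in enumerate(ranked_ids, start=1):
            scores[chunk_id] += weight / (k + rank)
    # Highest fused score first; ties broken by chunk id for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


rankings = {
    "bm25": ["c7", "c2", "c9"],   # exact clause-number matches
    "dense": ["c2", "c4", "c7"],  # semantic matches
}
fused = weighted_rrf(rankings, weights={"bm25": 1.0, "dense": 1.0})
top_ids = [chunk_id for chunk_id, _score in fused]
```

Chunks that appear in both lists rise to the top, and the per-retriever weights are the knobs you end up retuning as the query mix shifts.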

Citation handling

Returning an answer is easy. Returning an answer with exact source references, page numbers, and bounding boxes that a UI can highlight is not. You need to persist chunk metadata through the retrieval pipeline, attach it to streamed responses, and ensure the references stay accurate as you summarise and rerank.

Building time: 1 week. Ongoing: breaks when you change your chunking strategy.
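A minimal sketch of the "persist chunk metadata through the pipeline" idea: the source reference travels with the chunk as an immutable field, reranking only ever touches the score, and citations are built from chunk ids rather than from generated text. All type and field names here are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SourceRef:
    document_id: str
    page: int
    bbox: tuple[float, float, float, float]  # region a UI can highlight


@dataclass(frozen=True)
class RetrievedChunk:
    chunk_id: str
    text: str
    score: float
    source: SourceRef


def rerank(chunks: list[RetrievedChunk], new_scores: dict[str, float]) -> list[RetrievedChunk]:
    """Reorder chunks by new scores; the source metadata is copied unchanged."""
    rescored = [replace(c, score=new_scores.get(c.chunk_id, c.score)) for c in chunks]
    return sorted(rescored, key=lambda c: c.score, reverse=True)


def build_citations(cited_chunk_ids: list[str], chunks: list[RetrievedChunk]) -> list[dict]:
    """Turn the chunk ids an answer relied on into UI-ready citation records."""
    by_id = {c.chunk_id: c for c in chunks}
    citations = []
    for number, chunk_id in enumerate(cited_chunk_ids, start=1):
        if chunk_id not in by_id:
            # Refuse to emit a citation that cannot be traced to a retrieved chunk.
            raise ValueError(f"answer cites unknown chunk {chunk_id!r}")
        src = by_id[chunk_id].source
        citations.append(
            {
                "marker": f"[{number}]",
                "document_id": src.document_id,
                "page": src.page,
                "bbox": list(src.bbox),
            }
        )
    return citations
```

Failing loudly on an unknown chunk id is a deliberate choice in this sketch: a missing citation is easier to notice than a wrong one.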

Linked document awareness

Contracts reference other contracts. A system that cannot follow those references gives incomplete answers on a significant portion of real-world queries. Building cross-document retrieval means maintaining document relationship graphs, resolving references at query time, and managing the context window implications.

Building time: 1 to 2 weeks. Ongoing: document relationship management as your corpus grows.
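To make the query-time part concrete, here is a small sketch that expands a starting document into the set of documents it references, using a breadth-first walk with a depth limit and a document cap so the context window stays bounded. The graph shape (a dict of document id to referenced ids) and the document names are assumptions for illustration.

```python
from collections import deque


def linked_documents(
    graph: dict[str, list[str]],
    start: str,
    max_depth: int = 1,
    max_docs: int = 5,
) -> list[str]:
    """Return start plus the documents it references, nearest first.

    max_depth limits how many reference hops are followed.
    max_docs caps the result so retrieval does not flood the context window.
    """
    seen = {start}
    order = [start]
    queue = deque([(start, 0)])
    while queue and len(order) < max_docs:
        doc_id, depth = queue.popleft()
        if depth == max_depth:
            continue
        for ref in graph.get(doc_id, []):
            if ref in seen:
                continue  # also protects against circular references
            seen.add(ref)
            order.append(ref)
            queue.append((ref, depth + 1))
            if len(order) == max_docs:
                break
    return order


# Hypothetical corpus: a master agreement, a statement of work, and so on.
graph = {
    "msa": ["sow-1", "dpa"],
    "sow-1": ["msa", "pricing"],
    "dpa": [],
}
scope = linked_documents(graph, "msa", max_depth=1)
```

Retrieval would then run over the chunks of every document in `scope` rather than over the starting document alone.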

Agent routing

A single retrieval path is not enough for the variety of questions legal documents generate. You need an agent that can decide which retrieval strategy to use per question, maintain multi-turn conversation state, and handle follow-up questions without losing context.

Building time: 3 to 5 days with LangGraph. Ongoing: prompt engineering as models change.
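The routing decision itself can be sketched without any framework. The example below is a deliberately naive, rule-based stand-in: it sends questions that name a clause to keyword retrieval, everything else to hybrid retrieval, and prepends the previous turn when a question looks like a follow-up. In practice an LLM or a graph framework such as LangGraph would typically make these decisions; the regexes, strategy names, and `Conversation` type are assumptions for illustration only.

```python
import re
from dataclasses import dataclass, field

# Matches explicit references such as "clause 12.3" or "Section 4".
CLAUSE_REF = re.compile(r"\b(?:clause|section|article)\s+\d+(?:\.\d+)*", re.IGNORECASE)
# Pronouns that suggest the question depends on the previous turn.
FOLLOW_UP = re.compile(r"\b(?:it|that|this|those|they)\b", re.IGNORECASE)


@dataclass
class Conversation:
    turns: list[str] = field(default_factory=list)


def route(question: str, conversation: Conversation) -> dict:
    """Pick a retrieval strategy and build a context-aware query for one turn."""
    strategy = "keyword" if CLAUSE_REF.search(question) else "hybrid"
    query = question
    if conversation.turns and FOLLOW_UP.search(question):
        # Carry the previous question along so the retriever sees the referent.
        query = f"{conversation.turns[-1]} {question}"
    conversation.turns.append(question)
    return {"strategy": strategy, "query": query}


conversation = Conversation()
first = route("What does clause 12.3 say about termination?", conversation)
second = route("Does it survive expiry?", conversation)
```

Here `first` is routed to keyword retrieval because it names a clause, while `second` goes to hybrid retrieval with the earlier question prepended.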

Evaluation infrastructure

Without evaluation you cannot trust your system or know when it degrades. Building a legal-specific evaluation layer means defining metrics, constructing datasets, writing the scoring logic, and wiring it into CI. This is the part most teams skip — and then regret six months later.

Building time: 2 to 3 weeks for a meaningful eval suite. Ongoing: dataset maintenance as your system evolves.
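The smallest useful version of "metrics, datasets, scoring logic, CI" is a retrieval metric computed over a labelled dataset and compared against a threshold. The sketch below uses recall@k as the example metric; the dataset format, the `retrieve` callable, and the threshold are assumptions, and this is not the MicroEvals API.

```python
from typing import Callable


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant chunk ids that appear in the top k results."""
    if not relevant:
        raise ValueError("each eval case needs at least one relevant chunk")
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def evaluate(cases: list[dict], retrieve: Callable[[str], list[str]], k: int = 5) -> float:
    """Mean recall@k over a dataset of {"question", "relevant"} cases."""
    scores = [
        recall_at_k(retrieve(case["question"]), set(case["relevant"]), k)
        for case in cases
    ]
    return sum(scores) / len(scores)


def check_threshold(score: float, minimum: float) -> None:
    """Raise so that a CI job fails when quality drops below the agreed bar."""
    if score < minimum:
        raise AssertionError(f"recall dropped to {score:.2f}, below {minimum:.2f}")
```

Wired into CI, a run would call `evaluate` with the real retriever and then `check_threshold`, so a chunking or model change that degrades retrieval fails the build instead of reaching users.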

Observability

When something goes wrong in production, you need to trace what was retrieved, what prompt was used, and where reasoning diverged. Without instrumentation you are debugging from user reports.

Building time: 2 to 3 days. Ongoing: low once the instrumentation is in place.
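A minimal sketch of that instrumentation, using only the standard library: each request gets a trace, each pipeline step records what it saw and how long it took, and the whole trace is emitted as one JSON line. The field names are hypothetical; a production setup would more likely send this to a tracing backend.

```python
import json
import time
import uuid
from contextlib import contextmanager


def new_trace(question: str) -> dict:
    """Start a trace for a single user question."""
    return {"trace_id": uuid.uuid4().hex, "question": question, "steps": []}


@contextmanager
def trace_step(trace: dict, name: str):
    """Record one pipeline step, including its duration and any error."""
    step = {"name": name}
    trace["steps"].append(step)
    start = time.perf_counter()
    try:
        yield step  # the caller attaches details such as chunk ids or the prompt
    except Exception as exc:
        step["error"] = repr(exc)
        raise
    finally:
        step["duration_ms"] = round((time.perf_counter() - start) * 1000, 2)


trace = new_trace("Can either party terminate for convenience?")
with trace_step(trace, "retrieve") as step:
    step["chunk_ids"] = ["c7", "c2"]  # placeholder for real retrieval output
with trace_step(trace, "generate") as step:
    step["prompt_version"] = "v3"     # placeholder identifier
print(json.dumps(trace))
```

With one line per request, answering "what was retrieved and which prompt was used" becomes a log search by `trace_id` rather than a reconstruction from user reports.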

The honest total

If you are building all of this well, you are looking at 6 to 10 weeks of engineering time before you have a production-grade system. That does not include the time to learn the edge cases in each component: the PDF layouts that break your chunker, the query patterns that expose your retrieval gaps, the model changes that degrade your prompt performance.

Most teams underestimate this by a factor of three. They build the happy path in a week and spend the next two months handling everything else.
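As a cross-check on the 6 to 10 week figure, the per-component estimates from the sections above can simply be added up. The sketch assumes five working days per week and one engineer building the components one after another; both are assumptions, not something the estimates state.

```python
# (low, high) build-time estimates in working days, taken from the sections above.
ESTIMATES_DAYS = {
    "PDF ingestion and chunking": (5, 10),  # 1 to 2 weeks
    "Hybrid retrieval": (3, 5),
    "Citation handling": (5, 5),            # 1 week
    "Linked document awareness": (5, 10),   # 1 to 2 weeks
    "Agent routing": (3, 5),
    "Evaluation infrastructure": (10, 15),  # 2 to 3 weeks
    "Observability": (2, 3),
}
DAYS_PER_WEEK = 5  # assumption: five working days per week

low_days = sum(low for low, _high in ESTIMATES_DAYS.values())
high_days = sum(high for _low, high in ESTIMATES_DAYS.values())

print(f"{low_days} to {high_days} working days")
print(f"{low_days / DAYS_PER_WEEK:.1f} to {high_days / DAYS_PER_WEEK:.1f} weeks")
```

Under those assumptions the sum comes to 33 to 53 working days, which is broadly in line with the 6 to 10 week range and sits slightly above it.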

Where LexStack fits

LexStack is not a replacement for all of this. It is a replacement for the parts you should not have to rebuild.

LexReviewer handles the ingestion pipeline, hybrid retrieval, citation infrastructure, linked document awareness, agent routing, chat history, and observability. It is open source, so you can read every line, extend any component, and self-host the whole thing.

MicroEvals handles the evaluation layer (metrics, datasets, CLI, CI integration) for the legal-specific failure modes that matter.

Law MCP handles structured access to external legal sources so your agent does not have to scrape or maintain its own legal data pipeline.

When to build your own anyway

LexStack is not the right choice for every team. Build your own if:

Your document types are highly specialised and require custom chunking logic that does not generalise

Your retrieval requirements are unique and the hybrid retrieval architecture does not match your query patterns

You have compliance constraints that prevent using any open-source dependencies in your stack

You want to own every line of code and have the engineering bandwidth to maintain it

For most teams building legal AI, none of those conditions apply. The question is not whether you can build this infrastructure. You can. The question is whether building it is the best use of your engineering time when the alternative is shipping the actual product six to ten weeks sooner.

A practical starting point

The fastest way to evaluate whether LexStack fits your needs is to run LexReviewer locally against a real document from your use case. The setup takes under an hour. If it handles your document types, your query patterns, and your citation requirements, you have saved weeks of infrastructure work. If it does not, you have a clear picture of what you need to build custom.

Repo: LexReviewer

LexStack is open-source infrastructure for legal AI. It includes LexReviewer for document RAG, Law MCP for structured legal tools, and MicroEvals for CI-native evaluation.